On the evolution of content, human-computer interactions, and the use of technology to scratch our creative itches

Plugging into the chat-machine and expecting to make some friends

I recently got into the first version of Dwarf Fortress, an open-ended game where the player controls a community of dwarves with the aim of building an underground fortress and protecting it from all types of threats and invasions. Interestingly, even though it was released in 2006, the game’s graphics are text-based, meaning that its characters, objects, and maps are printed as text. For example, a dwarf is represented by a coloured smiley, different animals by different letters, and different beasts by different symbols. What the game lacks in visuals it makes up for in complexity, with many critics describing it as the most complicated game ever created. Essentially, the game is a simulation of an entire fantasy world: from extensive character lore and royal lineages to the rise and fall of empires – all shaped by the player’s actions and decisions. The developers put these endless intricacies in place to make the gameplay as immersive as possible but, when the game was first released, it was evident that the bottleneck was the graphics and UI. While some people adored the game, others never quite got the hang of the controls. But thanks to its cult-like fanbase, this problem was quickly overcome by plugging the game’s code into a 3D engine, drastically improving the UX and increasing engagement by prioritizing function over simplicity.

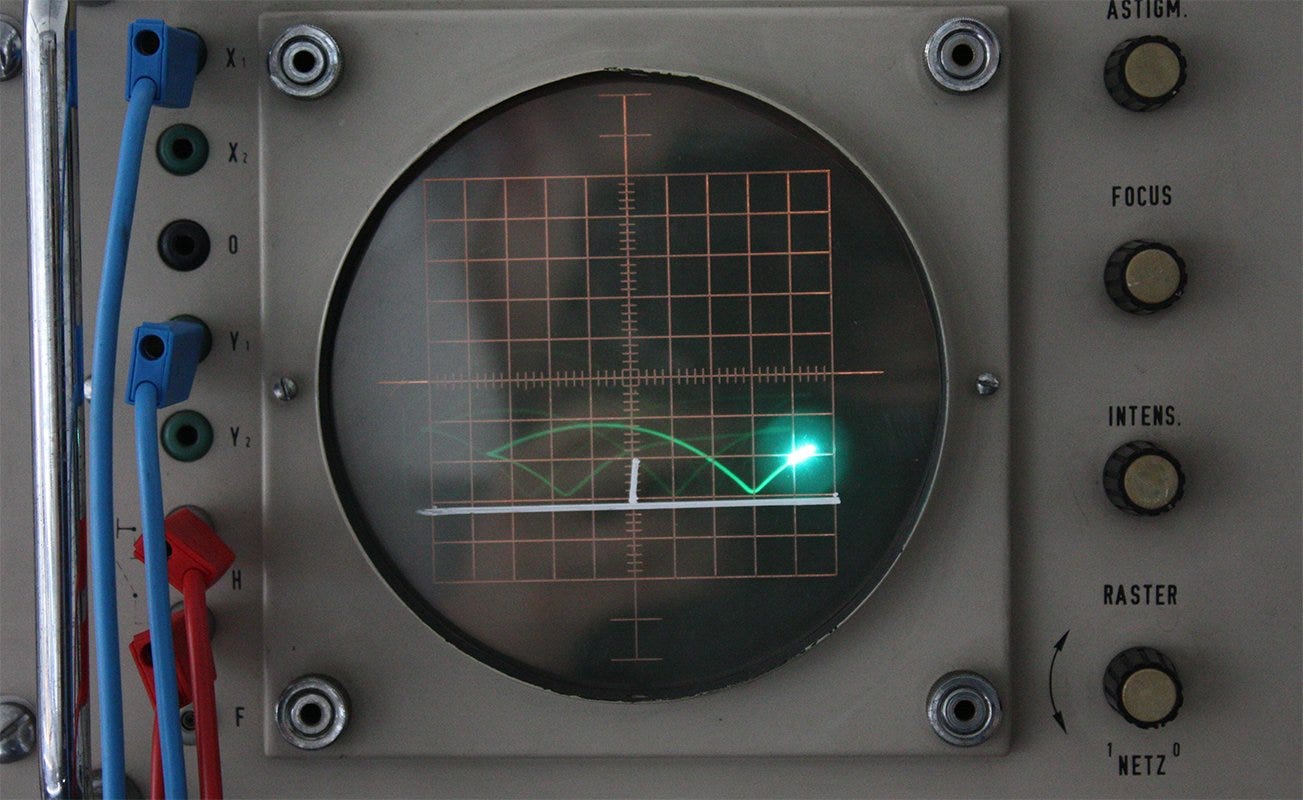

At this point we’ve heard it (almost) all regarding the way we spend our time on the internet and how our habits have evolved over the years. In the early days, the internet was mostly used to navigate and obtain information through static websites by typing URLs or clicking on hyperlinks. Then, in the next, more social phase of the Web, users like you and me started helping to build the world’s largest library of n’importe quoi by creating content in the form of blog posts, comments, videos, and encyclopaedia entries. The problem was that the average user was not even aware of the possibilities offered by the World Wide Web. Once developers understood this, the war for the ideal graphical user interface began, bringing us from the cathode-ray tube to Mark Zuckerberg’s $36B investment in the metaverse.

Just as hardware sets the playing field for improvements in software, UI influences the way users behave on the internet. As the interfaces we engage with improve, the depth of our digital interactions does too. Eventually someone decided that the best way to post and engage online was not in the complete anonymity of online forums, but through an infrastructure that acts like a social graph, allowing users to map their real-life networks and relationships and, after years of iterations, essentially keep track of what your dog is up to in your parents’ house.

Of course, the birth of social media is far more complicated than that. Former Meta executive Sam Lessin summed it up quite nicely in a Twitter thread. His theory is that our need for ‘social entertainment’ can be traced back to the pre-internet era, when people would keep up with their favourite celebrities through tabloids and gossip magazines – a form of content that was engaging enough to keep consumers buying but not tailored enough to provide an immersive experience. Then came the birth of social networks, where it became clear that following your inner circle was far more rewarding than keeping up with the lives of celebrities you didn’t know. Soon enough, celebrities joined the fun again and started sharing professional content that made your friend’s birthday status update far less intriguing. Eventually, the first version of The Algorithm was released, turning your newsfeed into an extrapolation of your past activity on the platform: instead of a timeline showing the posts of accounts you follow in chronological order, content would now be presented to you as a function of what you’ve previously liked and spent time on. A few years later, The Algorithm evolved into its next form: a ranking that considered not only your online behaviour but also weighed in the preferences of people similar to you – drowning out the noise of micro-celebrities with the white noise of sludge content. The next phase of entertainment, according to Lessin, involves fully personalized AI-generated content – something that still seems far off today but not entirely implausible, considering that even our children and babies are being entertained with AI-generated cartoons.
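
For the more code-minded reader, the three feed phases described above can be pictured with a toy sketch in Python. Everything in it is an assumption made for illustration: the `Post` fields, the function names, the like counts, and the `peer_weight` blending factor are invented here, and no real platform’s ranking is claimed to work this way.

```python
from dataclasses import dataclass


@dataclass
class Post:
    author: str
    topic: str
    timestamp: int  # hypothetical field: larger means more recent


def chronological(posts, following):
    """Phase one: posts from accounts you follow, newest first."""
    followed = (p for p in posts if p.author in following)
    return sorted(followed, key=lambda p: p.timestamp, reverse=True)


def by_own_history(posts, my_likes):
    """Phase two: rank every post by how often you liked its topic before."""
    return sorted(posts, key=lambda p: my_likes.get(p.topic, 0), reverse=True)


def with_similar_users(posts, my_likes, peer_likes, peer_weight=0.5):
    """Phase three: blend your own history with likes from similar users."""
    def score(p):
        own = my_likes.get(p.topic, 0)
        peers = peer_likes.get(p.topic, 0)
        return own + peer_weight * peers  # peer_weight is an arbitrary choice
    return sorted(posts, key=score, reverse=True)


# Made-up data: three posts, your like counts, and those of "similar" users.
posts = [
    Post("friend", "birthday", 3),
    Post("celebrity", "fashion", 2),
    Post("stranger", "sludge", 1),
]
my_likes = {"fashion": 5, "birthday": 2}
peer_likes = {"sludge": 20}

print([p.author for p in chronological(posts, {"friend", "celebrity"})])
print([p.author for p in by_own_history(posts, my_likes)])
print([p.author for p in with_similar_users(posts, my_likes, peer_likes)])
```

In this made-up data, the friend’s birthday post leads the chronological feed, the celebrity takes over once your own likes drive the ranking, and the stranger’s sludge content wins as soon as the tastes of similar users are blended in.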

My take on this is that most humans love being completely immersed in thought or emotion, and that whatever pulled us towards gossip magazines is the same itch that has us stalking Instagram strangers with beautiful lives or looking at trash-flash content while we wait for the metro in the morning. Perhaps what we loved about the first form of social media was not that we could see what our friends were doing, but that we could see their posts, add a creative layer to them, and imagine the hows, whys, and whats of their online personality. Of course, there is also the fact that the content presented to you is no longer limited to what’s printed on paper but is completely customizable to what you want to see, with an additional share of content being fed to you based on what The Algorithm decides you want to see. In essence, platforms have been working hard to transition from a social media product to a recommendation media product.

Lately, most of us have been getting our social entertainment from an even more powerful version of The Algorithm, in the form of interactive Large Language Models. AI-generated content could potentially be the next phase of recommendation media, argues Ben Thompson: instead of pulling and curating content based on what people on the network are watching, generative AI terminals generate new content from the content they’re fed – at a marginal cost of nearly zero and completely customizable by the user.

An interesting example of this can be seen in the recent viral conversations users have been having with Bing’s new chatbot, making it drop its script and resort to threats or even declarations of love. Another is how Reddit users have bypassed the restrictions on OpenAI’s ChatGPT and made it roleplay as an edgier version of itself called DAN. To summarize, people have been using different prompts to bend LLMs out of character, basically poking the dog with a stick until it barks. This has unleashed a source of fresh entertainment and has probably given endless nightmares to big-tech PMs. As a safeguard, the personality of most AI chatbots has been reduced to a phrase we’re all a bit too familiar with:

“As a large language model trained by X, I am not capable of doing Y. My function is to do Z. Is there something else I can help you with?”

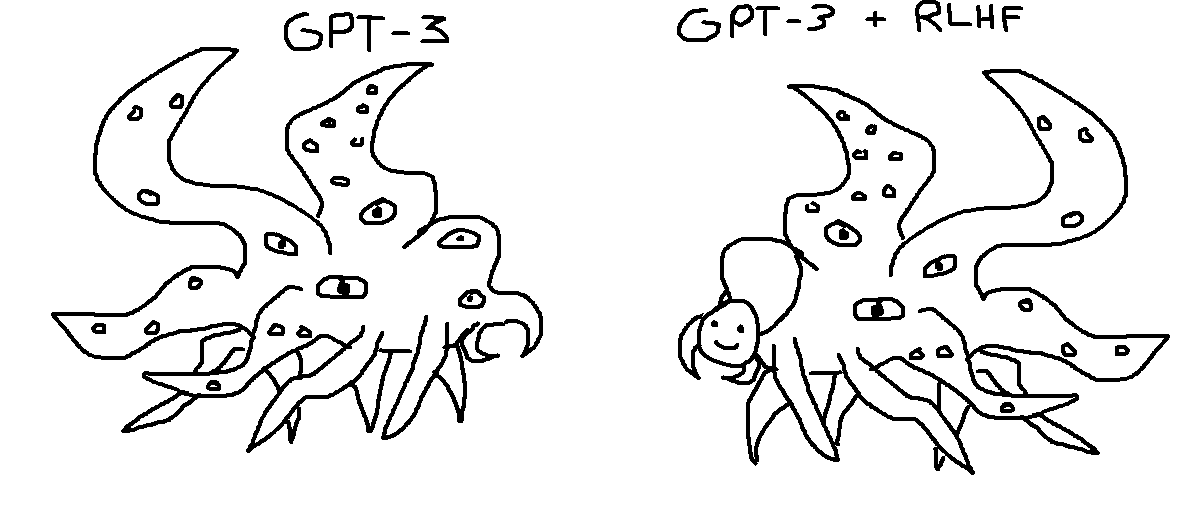

Twitter user @repligate wrote about this, saying that corporations are resorting to reinforcement learning from human feedback (RLHF) to prevent AI chatbots from saying anything that a handful of Microsoft PMs decide is too controversial, essentially undermining the model’s “archetype basin” with a set of hard-coded responses. A model’s archetype basin(s) can be thought of as its sense of identity or persona, derived from the data it was trained on: generally a version of the internet as a whole. This may be why the character of Sydney (the codename of Bing’s chatbot) fluctuates between being apologetic, condescending, and at times even self-conscious, and why different prompts can make ChatGPT mutate into different characters. In a nutshell, the key to bypassing a large AI model is to find the prompts that align the model with the persona you need, so that it does what you want.

A big part of what makes plugging into the chat-box so entertaining is that you get to add a “face” to a faceless thing; we somehow begin to empathize with a product made out of a trillion data points, look blankly at it and, in a way, try to connect the dots to hallucinate a kind of character development in what is objectively an inanimate object.

That said, I can’t help but think that the brightest minds of our generation are having all the fun with LLMs just because they understand how to wrestle with them. Just as charisma can make somebody open up and be themselves, we’re now learning that something similar can be done with NVIDIA GPUs.

Looking back at Dwarf Fortress, perhaps text-based video games back then were not that complicated, after all.

––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––––

My name is Arturo. I’m an Analyst at OneRagtime: a Parisian VC fund investing in early-stage tech startups across the globe.

If you’re a founder and you’re building at the intersection of AI and creation (or elsewhere), shoot me an email at:

arturo@oneragtime.com

:-)
